Math for Deep Learning I

Gaining an Intuitive Mathematical Understanding of Large Models

Vectors are the building blocks of meaning in AI.

This is the first in a series of articles on the math you need if you’re working with large language models on your own machine—or if you’re transitioning from traditional development work into AI.

But unlike many tutorials, my goal here is not just to show you formulas. Instead, I want to show you why these mathematical tools matter. Knowing the mechanics is useful, but knowing why they matter gives you intuition—and intuition is what will make you an effective AI engineer.

Why Matrices Matter

Why are matrices so central to machine learning?

Because nearly everything in modern AI is built on matrix algebra: the hidden layers in neural networks, forward propagation and backpropagation, and the way billions of parameters are represented.

When you train a model, you’re modifying billions of parameters. Those parameters live in matrices. The updates to those parameters during training? Also matrices. The way the model represents knowledge internally? Again, matrices.

Once you truly understand this, even your intuition about AI improves.

Take a simple example: Netflix movie recommendations. Netflix can represent your preferences as an array of movie IDs with values that measure how much you liked each one. Did you finish the movie? Did you stop after 5 minutes? Do you watch a lot of sci-fi or murder mysteries? Each of those signals becomes a number.

That list of numbers, the way Netflix represents your preferences, is a matrix (here, a single row). By multiplying your preference matrix with other matrices (representing other users, genres, or new movies), a recommender like Netflix can estimate what else you’ll like.

Amazon, Apple Music, YouTube—recommendation systems everywhere rely on this same principle: matrix multiplication.

Which is why we start here. Matrix multiplication is the single most important piece of math you’ll need to understand large language models.
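To make the recommendation example concrete, here is a minimal sketch in plain Python. The genre order, every number, the movie titles, and the names `preferences`, `movies`, and `score` are made up for illustration; this is not how Netflix actually computes recommendations, only the multiply-and-add idea behind it.

```python
# Hypothetical viewer preferences, one number per genre:
# [sci-fi, murder mystery, romance]
preferences = [0.9, 0.7, 0.1]

# Hypothetical movies, described with the same three genre slots.
movies = {
    "Space Heist": [1.0, 0.5, 0.0],
    "Summer Letters": [0.0, 0.1, 1.0],
}

def score(prefs, features):
    """Multiply matching entries and add the products together."""
    return sum(p * f for p, f in zip(prefs, features))

scores = {title: score(preferences, feats) for title, feats in movies.items()}
best = max(scores, key=scores.get)
print(best)  # Space Heist
```

The sci-fi-heavy title wins because its large entries line up with the viewer's large entries. That lining-up is exactly what the rest of this article formalizes.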

Matrix Basics

What Is a Matrix?

A matrix is just an array of numbers arranged in rows and columns.

- A 1D matrix (a list of numbers) can be written as a row or as a column. You’ll usually hear these called “row vectors” and “column vectors,” and in code they’re often just “arrays.” Don’t get distracted by the terminology: at the end of the day, they’re just 1D matrices.

- A 2D matrix is a grid with rows and columns.

When we describe a matrix, we use its shape: (m × n), where m is the number of rows and n is the number of columns. Always rows first, columns second. (Think R-C Cola, Ray Charles, Russell Crowe… it’ll stick.)
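If you like to see things in code, here is one way to read off a shape. Storing a matrix as a Python list of rows is my assumption for these sketches, and `shape` is a hypothetical helper name, not something from a library.

```python
def shape(matrix):
    """Return (rows, columns) for a matrix stored as a list of rows."""
    rows = len(matrix)
    cols = len(matrix[0]) if rows else 0
    return rows, cols

row_vector = [[1, 2, 3]]          # 1 row, 3 columns
column_vector = [[1], [2], [3]]   # 3 rows, 1 column
grid = [[1, 2, 3], [4, 5, 6]]     # 2 rows, 3 columns

print(shape(row_vector))     # (1, 3)
print(shape(column_vector))  # (3, 1)
print(shape(grid))           # (2, 3)
```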

When Can We Multiply Matrices?

You can’t multiply any two matrices arbitrarily—they must be “compatible.”

Here’s the rule:

- If matrix A has shape (m × n) and matrix B has shape (p × q), you can multiply them only if n = p.

- The resulting product has shape (m × q).

Think: inner numbers must match, outer numbers give the result.

Examples:

- (3 × 4) × (4 × 2) → valid, result is (3 × 2)

- (5 × 2) × (2 × 8) → valid, result is (5 × 8)

- (5 × 2) × (4 × 2) → not valid (2 ≠ 4)

This rule may seem trivial, but it underlies everything from image recognition to transformers. Get this wrong, and nothing works.
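The compatibility rule is small enough to write down as a function. This is a sketch with a hypothetical name, `product_shape`, that takes two (rows, columns) pairs and applies the "inner must match, outer gives the result" check to the three examples above.

```python
def product_shape(shape_a, shape_b):
    """Return the shape of A x B, or None if the shapes are incompatible."""
    m, n = shape_a
    p, q = shape_b
    if n != p:          # inner numbers must match
        return None
    return (m, q)       # outer numbers give the result

print(product_shape((3, 4), (4, 2)))  # (3, 2)
print(product_shape((5, 2), (2, 8)))  # (5, 8)
print(product_shape((5, 2), (4, 2)))  # None
```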

How to Multiply Matrices

Let’s walk through progressively more complex examples.

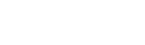

Example 1:

(1 × 2) × (2 × 1)

Shape check: inner dimensions match (2 = 2), so multiplication is valid.

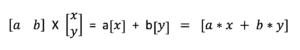

Result: (1 × 1)—just a number, also called a scalar.

Formula:

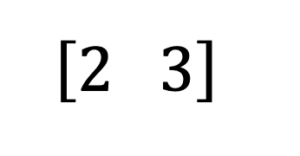

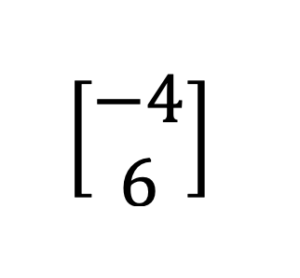

Our 1 × 2 array is this:

Our 2 × 1 array is this:

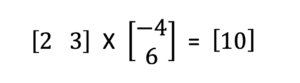

The multiplication we will do and the result are here:

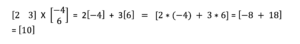

And applying the formula to our specific example is here:

That’s it: multiply corresponding elements, add them up.

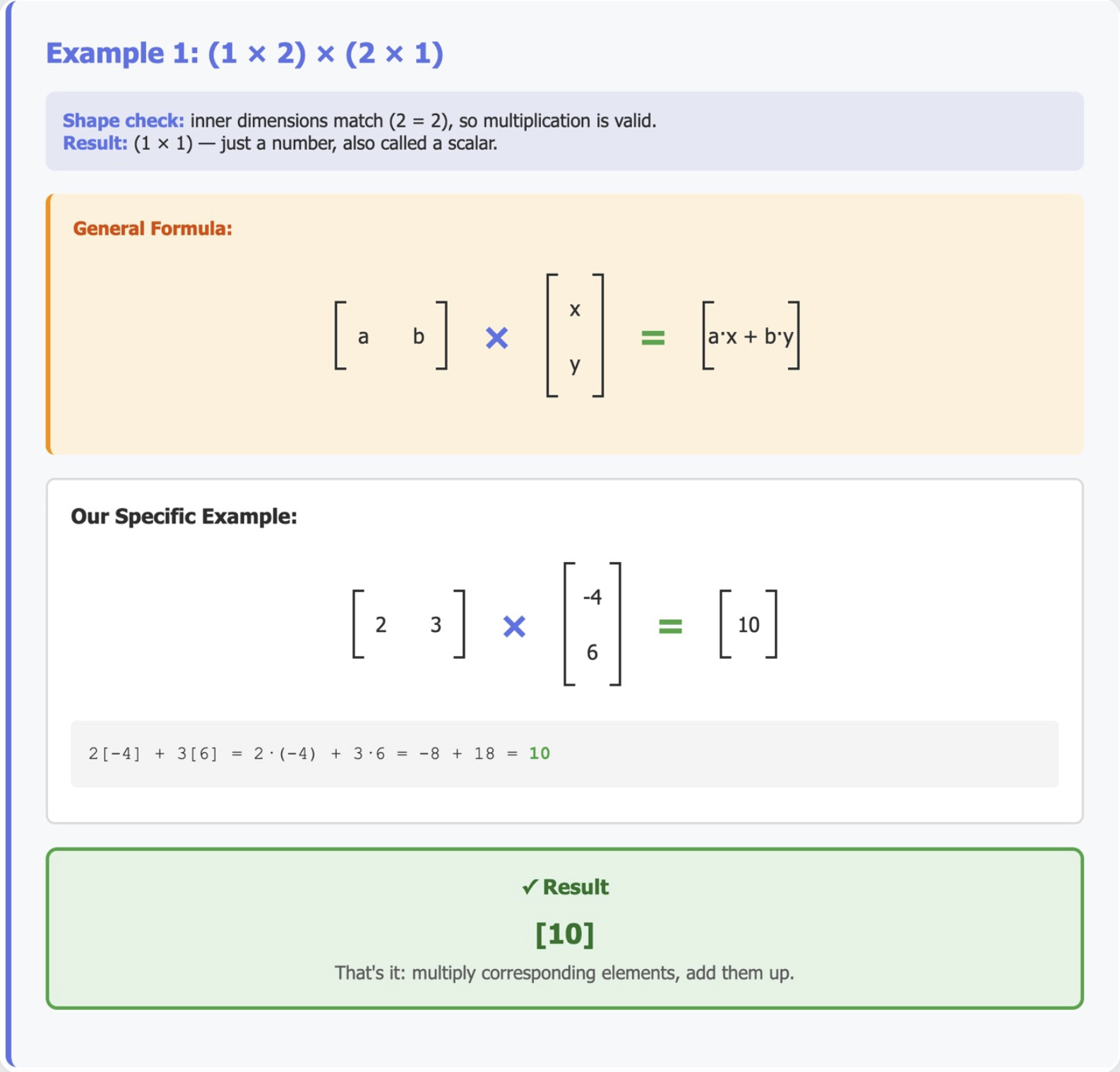

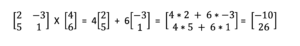

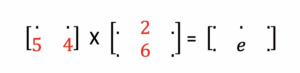
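Here is the same multiply-and-add step for a (1 × 2) × (2 × 1) product as a short Python sketch. The numbers are placeholders I chose, not necessarily the ones in the figures, and `row_times_column` is a hypothetical helper name.

```python
def row_times_column(row, column):
    """Multiply a (1 x n) row by an (n x 1) column and return a scalar."""
    if len(row) != len(column):
        raise ValueError("inner dimensions must match")
    return sum(r * c for r, c in zip(row, column))

# Placeholder values for illustration only.
row = [1, 2]      # shape (1 x 2)
column = [3, 4]   # shape (2 x 1), written flat for brevity
print(row_times_column(row, column))  # 1*3 + 2*4 = 11
```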

Example 2:

(2 × 2) × (2 × 1)

Shape check: inner dimensions match (2 = 2).

Result: outer dimensions show the result shape is (2 × 1)—a column vector.

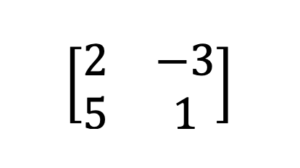

Our 2 × 2 array is this:

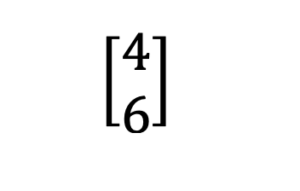

Our 2 × 1 array is this:

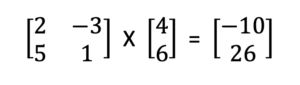

The multiplication we will do and the result are here:

The formula is here:

And applying the formula to our specific example is here:

Rule of thumb: each row of the first matrix gets dotted with the single column of the second matrix.

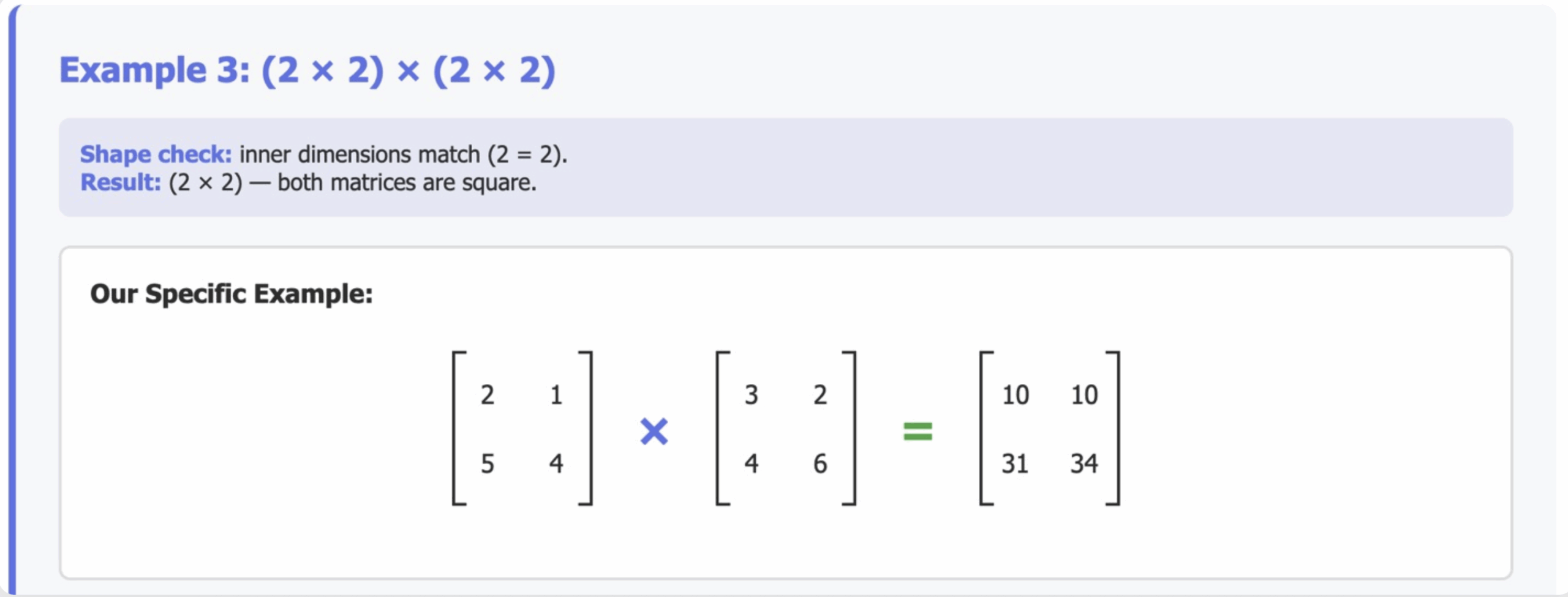

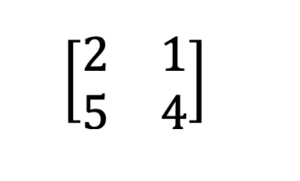

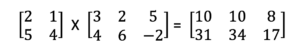
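The rule of thumb for a (2 × 2) × (2 × 1) product, each row dotted with the single column, can be sketched like this. Again the numbers are placeholders of my own, and `matrix_times_column` is a hypothetical name.

```python
def matrix_times_column(matrix, column):
    """Dot every row of `matrix` with `column`; one result per row."""
    result = []
    for row in matrix:
        if len(row) != len(column):
            raise ValueError("inner dimensions must match")
        result.append(sum(r * c for r, c in zip(row, column)))
    return result

# Placeholder values for illustration only.
matrix = [[1, 2],
          [3, 4]]   # shape (2 x 2)
column = [5, 6]     # shape (2 x 1), written flat for brevity

# Row 1: 1*5 + 2*6 = 17, row 2: 3*5 + 4*6 = 39
print(matrix_times_column(matrix, column))  # [17, 39]
```

Two rows in the first matrix means two dot products, which is why the result has two entries.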

Example 3:

(2 × 2) × (2 × 2)

Now both matrices are square.

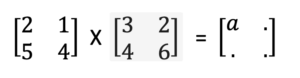

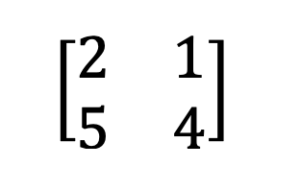

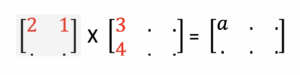

Our first 2 × 2 matrix is this:

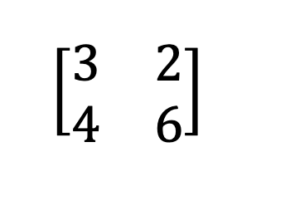

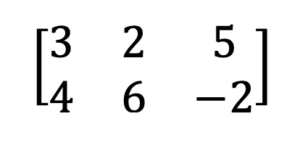

Our second 2 × 2 matrix is this:

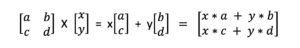

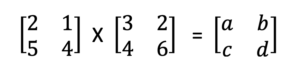

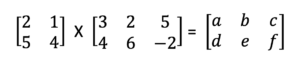

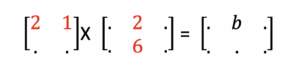

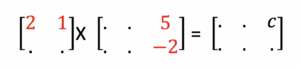

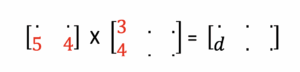

The formula is:

Where a = 2*3 + 1*4 = 6 + 4 = 10

And b = 2*2 + 1*6 = 4 + 6 = 10

And c = 5*3 + 4*4 = 15 + 16 = 31

And d = 5*2 + 4*6 = 10 + 24 = 34.

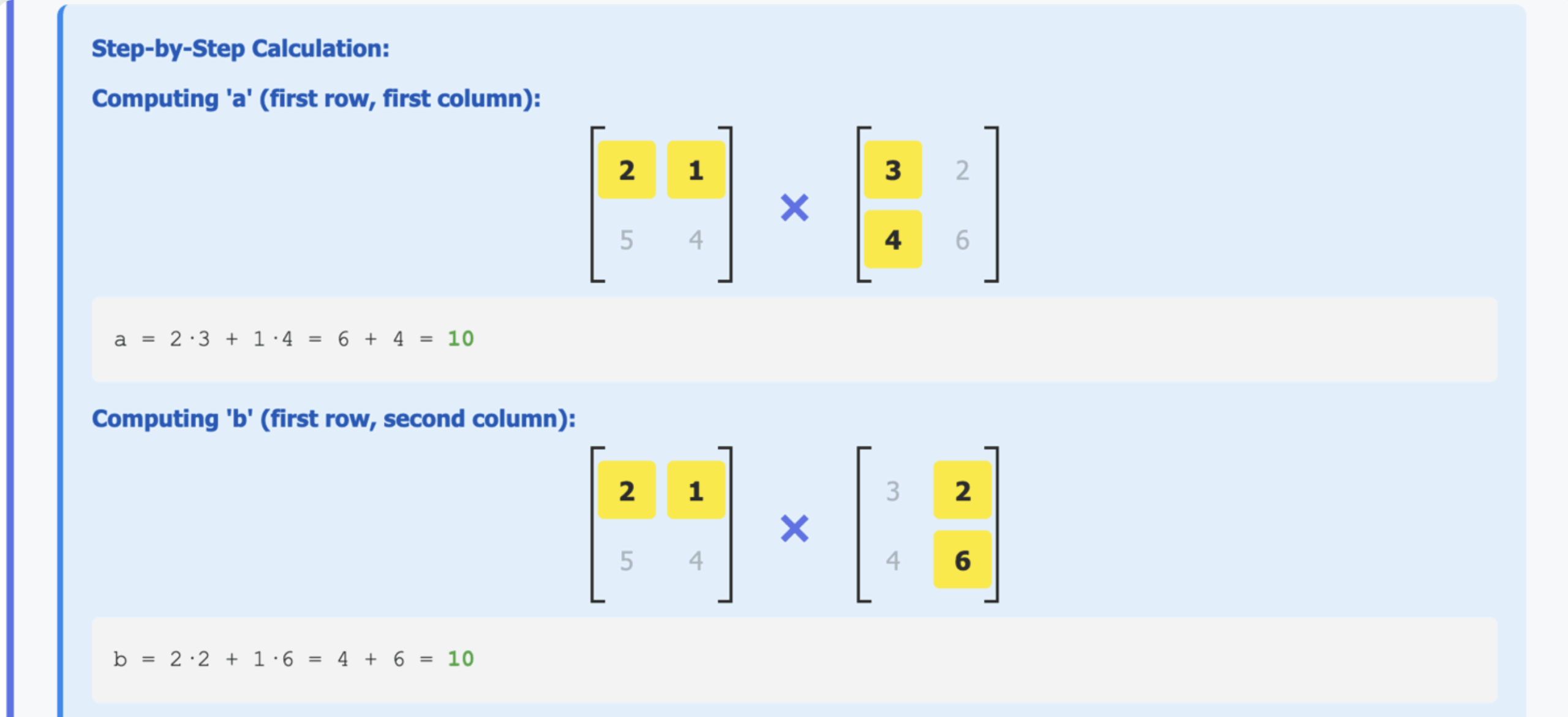

First focus on calculating the value for a:

‘a’ is in the first row and first column of the result. To get it, we take the first row of the first matrix and the first column of the second matrix, multiply them element by element, and add the products:

Here: a = 2*3 + 1*4 = 6 + 4 = 10.


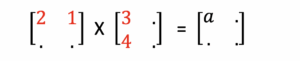

Then focus on calculating the value for b:

‘b’ is in the first row and second column of the result. To get it, we take the first row of the first matrix and the second column of the second matrix, multiply them element by element, and add the products:

Thus: b = 2*2 + 1*6 = 4 + 6 = 10


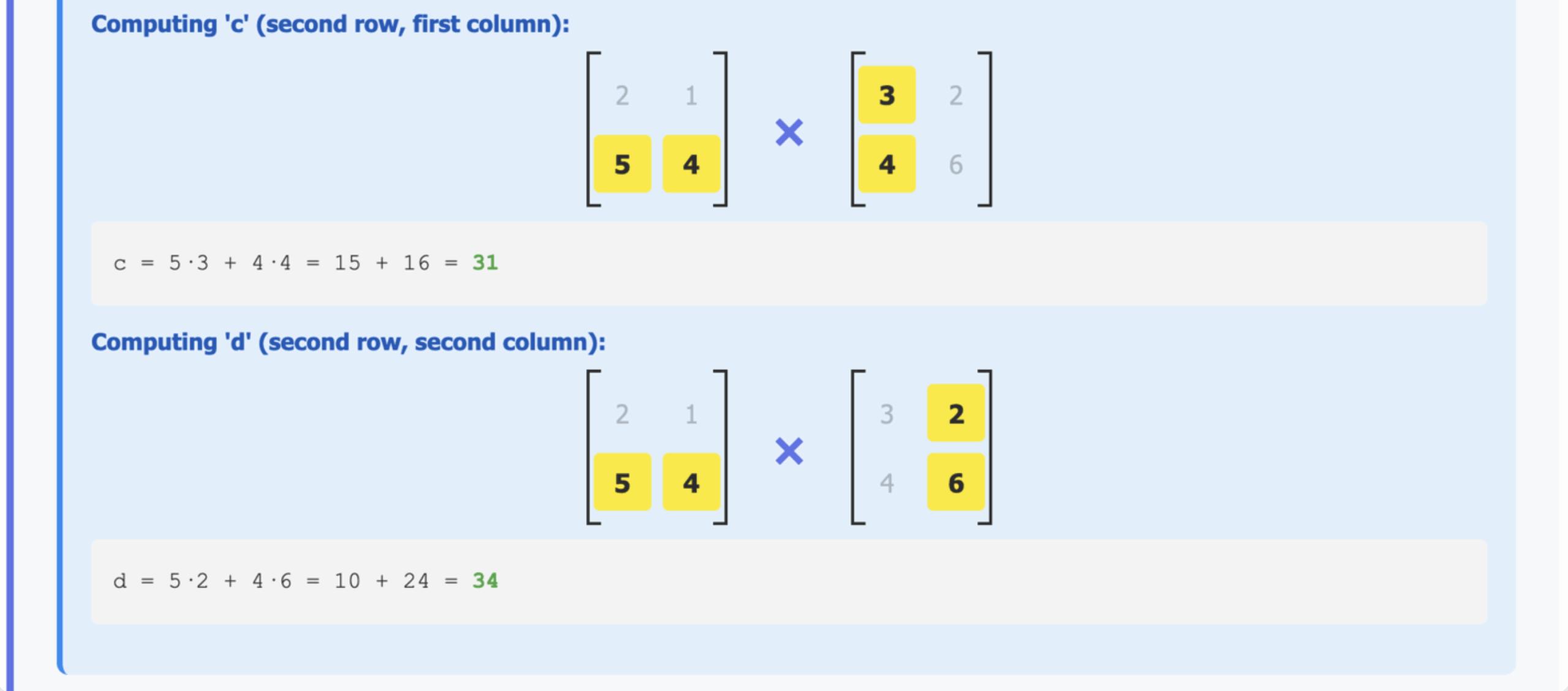

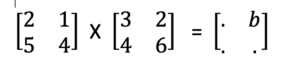

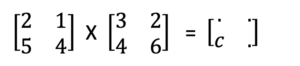

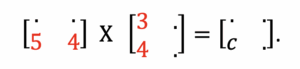

Then focus on calculating the value for c:

‘c’ is in the second row and first column of the result. To get it, we take the second row of the first matrix and the first column of the second matrix, multiply them element by element, and add the products:

c = 5*3 + 4*4 = 15 + 16 = 31.


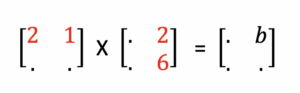

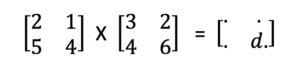

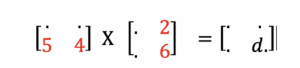

Finally focus on calculating the value for d:

‘d’ is in the second row and second column of the result. To get it, we take the second row of the first matrix and the second column of the second matrix, multiply them element by element, and add the products:

d = 5*2 + 4*6 = 10 + 24 = 34.

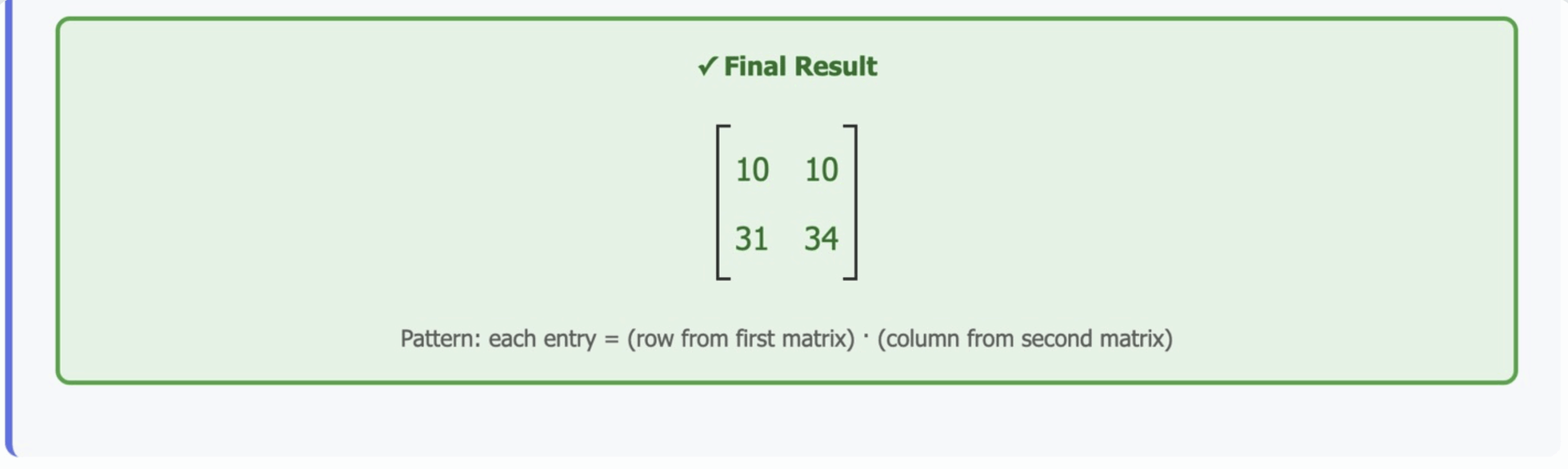

Notice the pattern:

- To get each entry in the result, take a row from the first matrix and a column from the second, multiply element by element, then sum.

- This pattern holds no matter the size of the matrices, as long as the inner dimensions match.
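That row-by-column pattern translates almost word for word into three nested loops. The sketch below uses a hypothetical function name, `matmul`, and the two matrices implied by the arithmetic in Example 3 (first matrix rows 2, 1 and 5, 4; second matrix rows 3, 2 and 4, 6).

```python
def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    n_rows, n_inner, n_cols = len(a), len(b), len(b[0])
    if any(len(row) != n_inner for row in a):
        raise ValueError("inner dimensions must match")
    result = [[0] * n_cols for _ in range(n_rows)]
    for i in range(n_rows):           # pick a row of the first matrix
        for j in range(n_cols):       # pick a column of the second matrix
            for k in range(n_inner):  # multiply element by element, then sum
                result[i][j] += a[i][k] * b[k][j]
    return result

first = [[2, 1],
         [5, 4]]
second = [[3, 2],
          [4, 6]]
print(matmul(first, second))  # [[10, 10], [31, 34]]
```

In practice you would reach for a numerical library rather than hand-written loops, but the loops show exactly where each entry of the result comes from.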

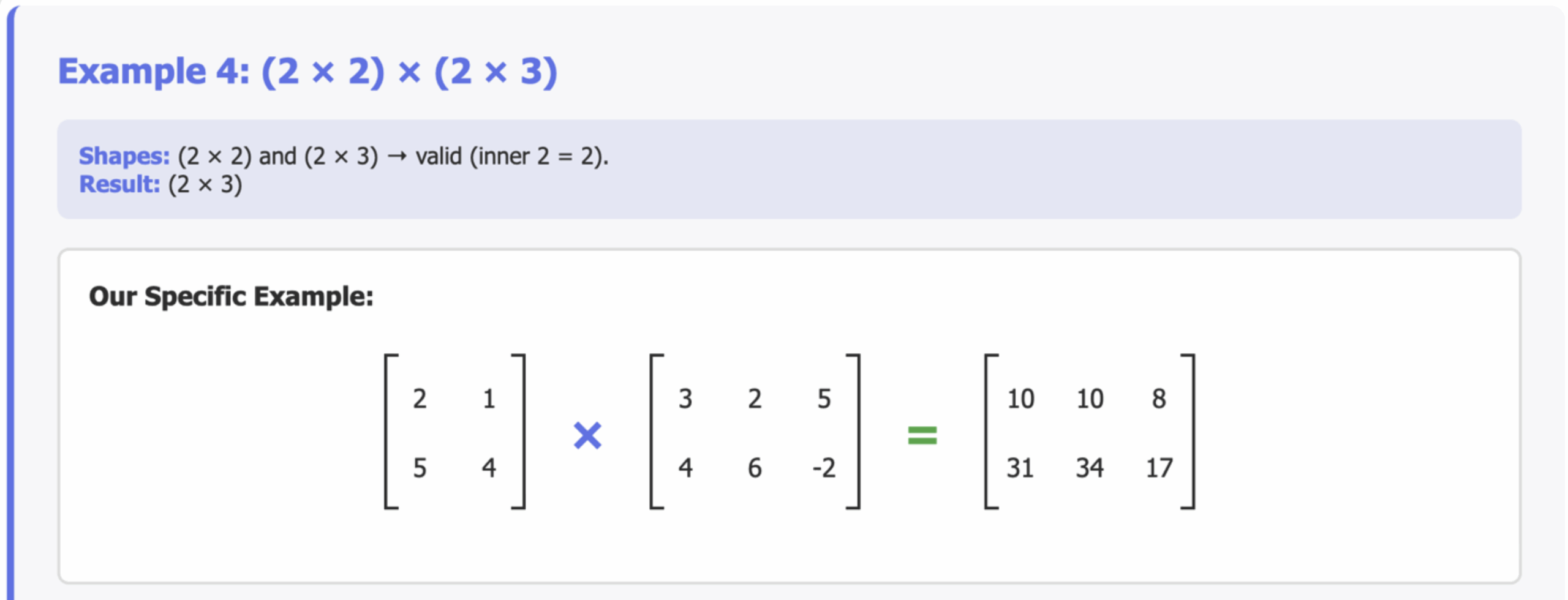

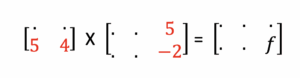

Example 4:

(2 × 2) × (2 × 3)

Shapes: (2 × 2) and (2 × 3) → valid (inner 2 = 2).

Result: (2 × 3).

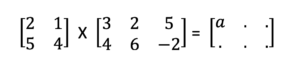

Our first matrix is this:

Our second matrix is this:

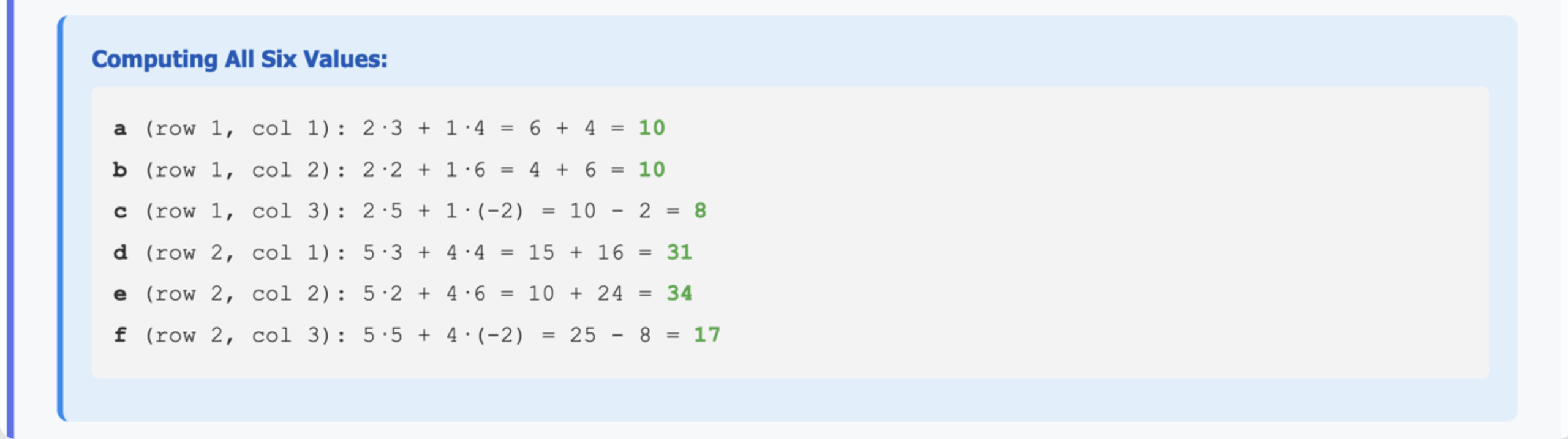

So let’s proceed to determine the 6 values in the product:

‘a’ is in the first row and first column. So we do this:

a = 2*3 + 1*4 = 6 + 4 = 10.

‘b’ is in the first row and second column. So we multiply like this:

b = 2*2 + 1*6 = 4 + 6 = 10.

‘c’ is in the first row and third column:

c = 2*5 + 1*(-2) = 10 – 2 = 8.

‘d’ is in the second row and first column:

d = 5*3 + 4*4 = 15 + 16 = 31.

‘e’ is in the second row and second column:

e = 5*2 + 4*6 = 10 + 24 = 34.

‘f’ is in the second row and third column:

f = 5*5 + 4*(-2) = 25 – 8 = 17.

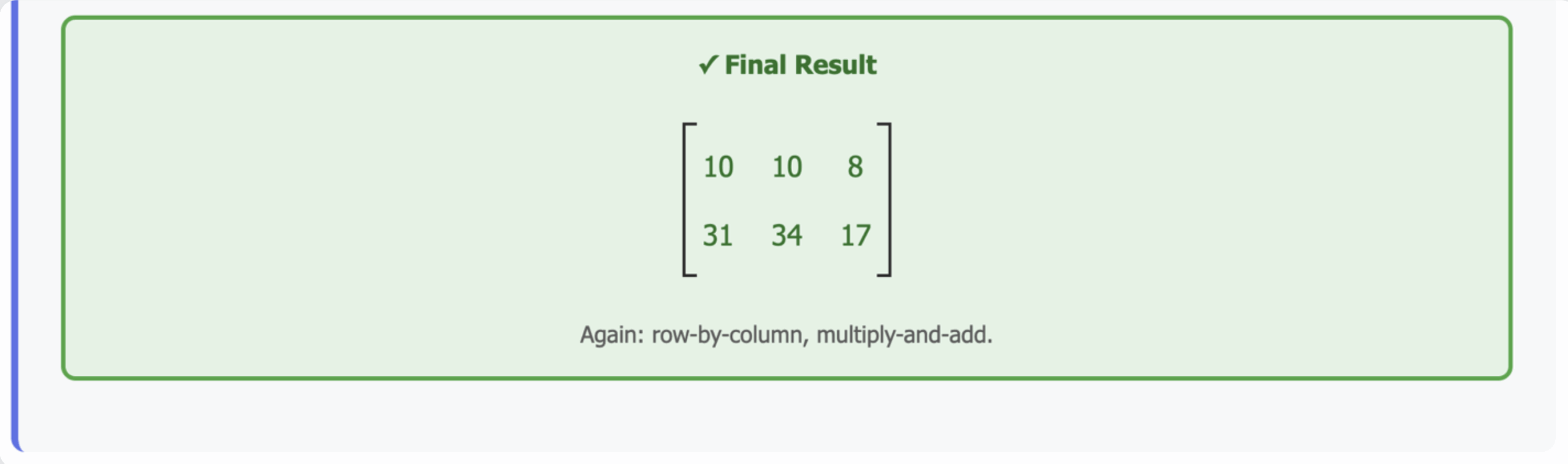

So the final result is the (2 × 3) matrix whose first row is (10, 10, 8) and whose second row is (31, 34, 17).

Again: row-by-column, multiply-and-add.
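You can check the six values from Example 4 with a few lines of Python. This sketch uses the matrices implied by the arithmetic above and pulls the columns out of the second matrix with `zip(*second)`; the variable names are my own.

```python
first = [[2, 1],
         [5, 4]]          # shape (2 x 2)
second = [[3, 2, 5],
          [4, 6, -2]]     # shape (2 x 3)

# zip(*second) regroups the rows into columns: (3, 4), (2, 6), (5, -2)
columns = list(zip(*second))

# One dot product per (row, column) pair.
product = [
    [sum(r * c for r, c in zip(row, col)) for col in columns]
    for row in first
]
print(product)  # [[10, 10, 8], [31, 34, 17]]
```

Two rows times three columns gives six dot products, which matches the (2 × 3) shape predicted before doing any arithmetic.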

Why This Matters

It may feel like we’re just crunching numbers, but this is the math behind how neural networks operate.

- When your model processes an image or a sentence, it’s multiplying huge matrices.

- When it updates weights during training, it’s multiplying matrices again.

- Even when it outputs a single probability (like “this is a cat with 92% confidence”), that’s the result of collapsing matrices into smaller ones.

Every step of deep learning relies on these multiplication rules.
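To see how shapes shrink on the way to a single probability, here is a toy sketch. Everything in it is an assumption for illustration: the input values, the weights, the two-step structure, and the use of a sigmoid function to squash the final number into the range 0 to 1. Real networks also apply nonlinear functions between layers, which I leave out here.

```python
import math

def matmul(a, b):
    """Row-by-column product of two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)]
            for row in a]

# Hypothetical input features, shape (1 x 3).
features = [[0.5, 1.0, -1.0]]

# Hypothetical weights, shapes (3 x 2) and (2 x 1).
hidden_weights = [[1.0, 0.0],
                  [0.5, 1.0],
                  [0.0, -1.0]]
output_weights = [[1.0],
                  [1.0]]

hidden = matmul(features, hidden_weights)  # (1 x 3) x (3 x 2) -> (1 x 2)
logit = matmul(hidden, output_weights)     # (1 x 2) x (2 x 1) -> (1 x 1)

# Sigmoid turns the single remaining number into a value between 0 and 1.
probability = 1 / (1 + math.exp(-logit[0][0]))
print(f"{probability:.0%} confidence")
```

Each multiplication keeps the outer dimensions and drops the inner one, so three input numbers become two, then one.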

Practice for Intuition

The best way to make this stick is to practice. Try multiplying these matrices on your own (keep the shapes in mind before calculating).

- (2 × 2) × (2 × 1) – 5 exercises

- (2 × 2) × (2 × 2) – 5 exercises

- Mixed shapes (e.g. (3 × 4) × (4 × 3), (4 × 4) × (4 × 4)) – 10 exercises

Work through them until you can “see” the shapes and even predict the result’s dimensions before doing any math. That intuition is what you’ll rely on when building AI models.
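If you want an endless supply of practice problems, a small generator like the one below can produce them and keep the answer aside for checking. This is only a sketch: the function names, the value range of -5 to 5, and the fixed seed are my own choices.

```python
import random

def matmul(a, b):
    """Row-by-column product of two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)]
            for row in a]

def random_matrix(rows, cols, rng, low=-5, high=5):
    """Build a rows x cols matrix of small random integers."""
    return [[rng.randint(low, high) for _ in range(cols)] for _ in range(rows)]

def make_exercise(shape_a, shape_b, rng):
    """Return (A, B, answer) for one practice problem."""
    if shape_a[1] != shape_b[0]:
        raise ValueError("inner dimensions must match")
    a = random_matrix(shape_a[0], shape_a[1], rng)
    b = random_matrix(shape_b[0], shape_b[1], rng)
    return a, b, matmul(a, b)

rng = random.Random(0)  # fixed seed so the same problems come back each run
a, b, answer = make_exercise((3, 4), (4, 3), rng)
print("A =", a)
print("B =", b)
# Predict the shape, work the product by hand, then compare with `answer`.
```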

Takeaway

Matrix multiplication is not optional background knowledge—it’s the core operation of AI. Get comfortable with shapes, rules, and calculations, and you’ll be ready for everything from recommendation systems to large language models.

In the next article, we’ll use this foundation to show how matrix multiplication collapses and transforms representations—a critical step in moving from raw data to meaningful AI outputs.

Date

November 2, 2025